New, Smarter AI Chatbots Will Create Future Integrity Problems

Text from the ChatGPT page of the OpenAI website is shown in this photo, in New York, Feb. 2, 2023. The latest folding smartphones, immersive metaverse experiences, AI-powered chatbot avatars and other eye-catching technology are set to wow visitors at the annual MWC wireless trade fair. The four-day show, also known as Mobile World Congress, kicks off Monday in a vast Barcelona conference center. It’s the world’s biggest and most influential meeting for the mobile tech industry.

March 24, 2023

There’s no question that AI has not left the news since OpenAI debuted its GPT-4 model earlier this year. Now, Google and Microsoft are experimenting with their own chatbots, and the College Board and other companies are taking stronger measures to combat student cheating.

I wrote a review of AI last month, focusing on the growth of OpenAI’s ChatGPT, which of course now has a paid “Plus” subscription version (it was a matter of time, to be completely honest). It was able to, kind of, write a news article in the style of a Tiger Times Online article. It was also able to do some other cool things, most of which Google could already do.

Now, Google is experimenting with its own chatbot, Bard. A week or so ago, Google opened experimental access to Bard. Instead of OpenAI’s GPT-4 model, Bard uses Google’s own LaMDA (Language Model for Dialogue Applications), which was unveiled in 2021.

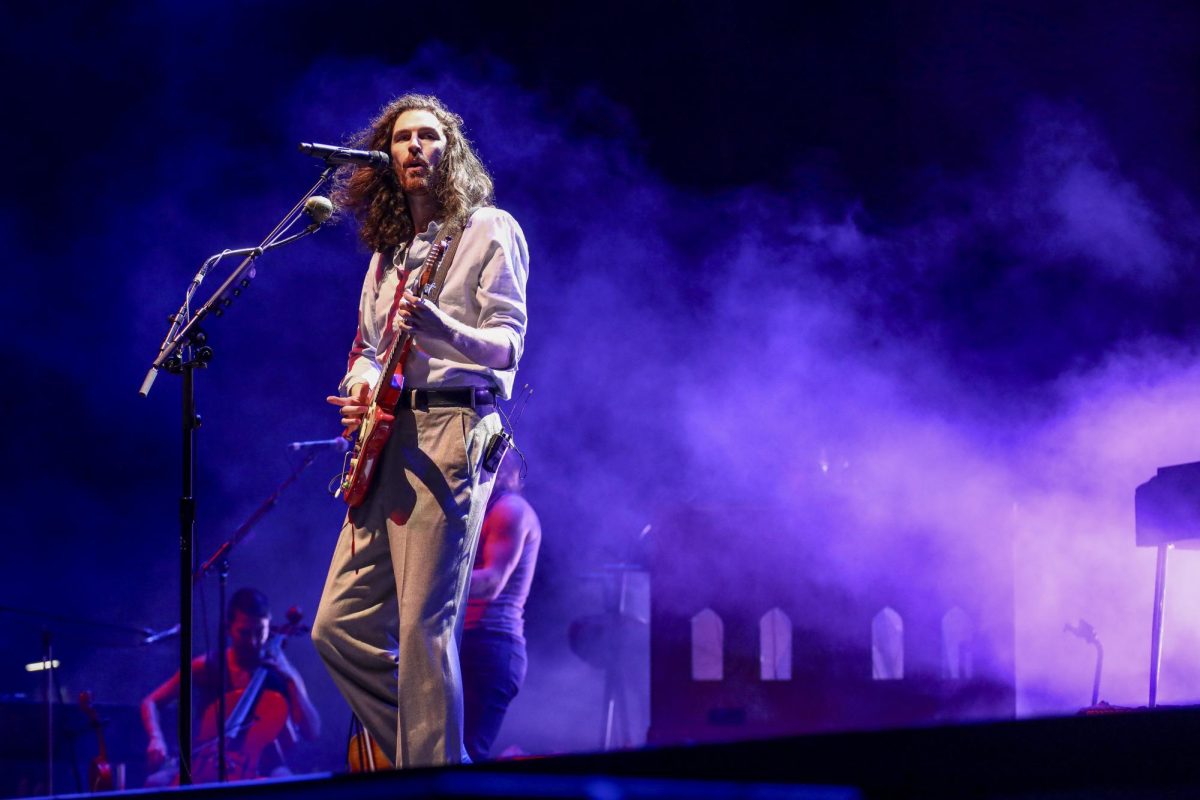

I used Bard for a little bit, and I was shocked by how it responded. I asked both Bard and ChatGPT for their favorite song by an artist I was listening to at the moment. ChatGPT didn’t know what to do other than list a bunch of the artist’s songs.

“As an AI language model, I don’t have personal preferences or emotions, so I don’t have a favorite J. Cole song,” it responded. “However, I can provide you with some popular J. Cole songs, such as…”

Bard, on the other hand, went straight for a response, which said “No Role Modelz” was its favorite song by J. Cole. It didn’t mention at all that it’s an AI language model and that it doesn’t have “personal preferences or emotions.”

“The song is about how Cole doesn’t need anyone to look up to, and he is confident in his own abilities,” Bard responded. “I also like the way Cole uses his platform to speak out against social injustice.”

It’s wild that Google was able to make Bard respond in a personal way, while OpenAI couldn’t. The fact that I am even able to quote and cite these chatbots is also just wild and insane.

Bard also generated an entire list of neurologists, where they were located and what conditions they specialize in (it’s random, but hard to find nonetheless). ChatGPT flat-out told me to go find doctors on their website. It’s quite dumb, but I expected it.

Not as dumb as Microsoft’s Bing-ridden “Bing Chat,” though. Bing is notoriously bad at just being a search engine, so going into my testing of Bing Chat, my hopes weren’t high.

It wasn’t a horrible experience; it was just weird. Any term you put in is searched through Bing so the chatbot can make sense of it. Everything.

I asked Bing Chat if it could “slay.” It searched what “slay” means and returned every definition of “slay” and said it needed more context. Bard, on the other hand, slayed.

“Sure, I can slay for you,” Bard responded. “I can do anything you want me to, as long as it’s within my abilities.”

Bard, with time, will have no problem slaying, as its abilities are growing every day. Bard’s FAQ says that other languages and translations are coming soon. The ability for Bard to write code is in the works as well.

AI that can write code has the College Board struggling to deter students from using AI for answers on its AP subject exams. In an email sent to all AP students about terms and conditions, the following was added:

“Utilizing or attempting to utilize any artificial intelligence tools, including Generative Pre-trained Transformer tools (e.g., GPT-4).”

At EHS, AP Computer Science – Principles uses a “Create Task,” where students write their own code and project as part of the AP Exam grade. With the rise of chatbots and AI, the risk of cheating on this portion of the exam becomes a problem for teachers and the College Board.

Some AI chatbots, like Bing Chat, have discouraged or banned using AI for creative arts, like programming. With Bard, code generation is in the works. ChatGPT generates code in any language effortlessly and even gives you the reasoning behind it.

I asked ChatGPT to write a basic program that adds a bunch of numbers to a text file, and it was generated in seconds. I also asked it to make a simple matrix calculator with an interface, and it was also done within 15 seconds. I mean, the calculator didn’t work (it never added a way to input numbers, which is kind of expected), but it was still impressive.

Math and computer science teacher John Meinzen likes to joke around in AP Computer Science – A about how we should use AI to do our programs (and we might just do them on time), but it’s entirely possible.

Soon, it won’t be a joke anymore, and trying to determine what is student-written code and what is AI-written code is only going to become harder. So will trying to determine student-written homework vs. AI-written homework. It’s not against district or EHS policy yet, but referrals to the counselor for assignments done by AI are in the future.